Orchestra

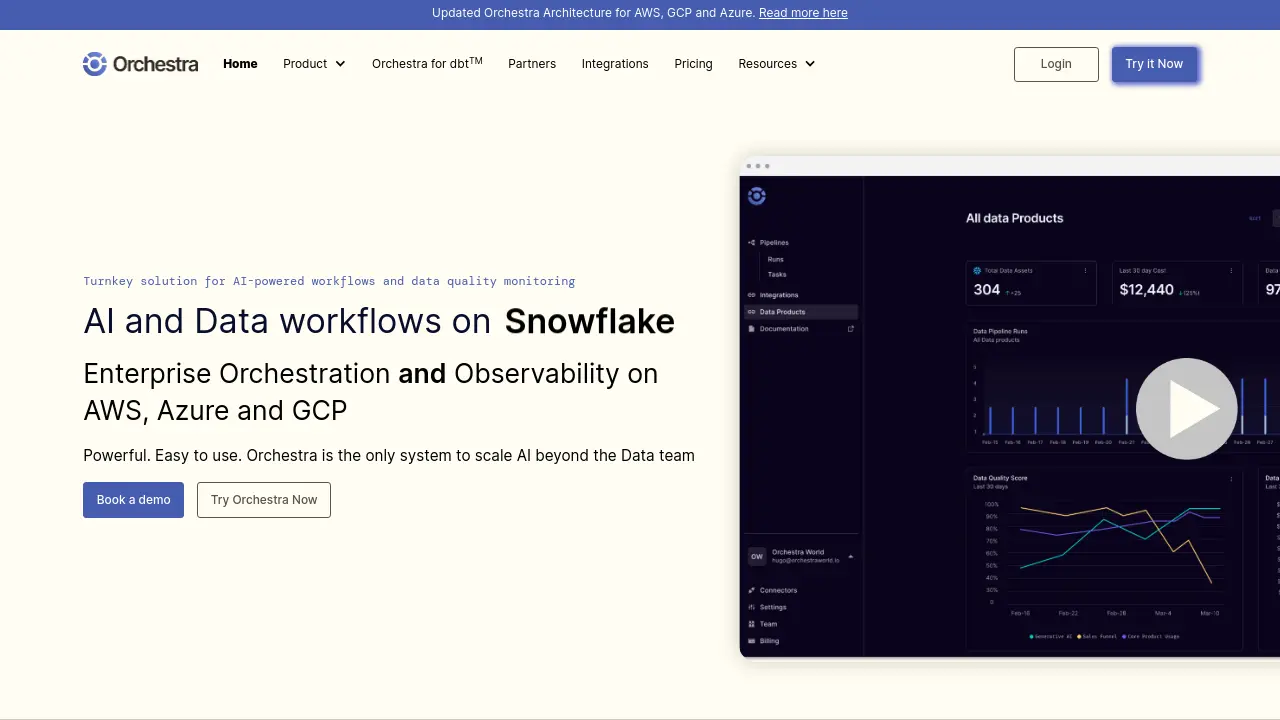

Turnkey solution for AI-powered workflows and data quality monitoring

Description

Orchestra provides a comprehensive solution for managing and scaling AI-powered workflows and ensuring data quality. It enables enterprises to turn platforms like Snowflake, Databricks, and BigQuery into end-to-end Data and AI Product Platforms, with orchestration and observability across AWS, Azure, and GCP environments. It is designed to be powerful for data engineers yet intuitive for business users, so that AI initiatives can scale beyond centralized data teams.

With its declarative workflow framework, Orchestra simplifies the creation and maintenance of data pipelines, letting users build either in code or through a user-friendly interface. It emphasizes proactive monitoring, alerting teams to pipeline failures and data quality issues so they can maintain stakeholder trust. By centralizing metadata and providing clear lineage, Orchestra helps organizations improve cost efficiency, debug issues quickly, and give domain teams reliable data workflows without significant overhead.

Key Features

- AI-Driven Workflows: Automate complex data processes and AI model pipelines with intelligent orchestration.

- Enterprise Data Orchestration: Manage and schedule data pipelines across Snowflake, Databricks, BigQuery, AWS, GCP, and Azure.

- Observability and Data Quality: Proactively monitor pipeline health and data quality, with alerts for failures and anomalies.

- Declarative Workflow Framework: Build and manage data pipelines using a simple, declarative approach suitable for both engineers and business users.

- Extensive Integrations: Natively connect with over 100 data tools, including Fivetran, dbt Core™, Airbyte, Python, and major cloud services.

- Code or UI Pipeline Building: Flexibility to construct and manage workflows through a programmatic interface or an intuitive graphical UI.

- Centralized Metadata and Lineage: Consolidate metadata and visualize asset-based data lineage for improved cost control and debugging.

- Serverless Architecture: Benefit from a fully managed, serverless platform offering predictable, fixed pricing.

- Role-Based Access Control (RBAC): Implement fine-grained access controls for enhanced security and data governance.

- Instant Debugging Capabilities: Quickly identify and resolve pipeline issues with accessible lineage and logs.

Use Cases

- Transforming Snowflake into an end-to-end Data and AI Product Platform.

- Implementing enterprise-wide data orchestration and observability on AWS, Azure, and GCP.

- Scaling AI and data workflows beyond central platform teams to domain-specific teams.

- Proactively monitoring data infrastructure for pipeline failures and data quality degradation.

- Reducing total cost of ownership (TCO) for data pipelines through efficient management.

- Building modular, cross-cloud data architectures for complex tasks.

- Enabling rapid development and deployment of data and AI workflows.

- Improving data trust and reliability with stakeholders through proactive issue resolution.

You Might Also Like

GIGABYTE

Contact for Pricing
Consumer and Enterprise Hardware Solutions for Gaming, AI, and High-Performance Computing

Autoblogging.pro

Freemium
Automate Your Blog with ChatGPT AI

SMMRY

Freemium
Transform lengthy content into concise summaries effortlessly.

Duda

Other
The Professional Website Builder for Agencies and SaaS Platforms

Baserow

Freemium
The open source Airtable alternative for data collaboration and application building.